CompareNet

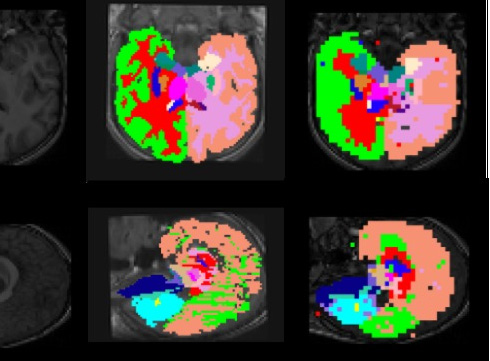

This is a research project I conducted at UCLA. We proposed CompareNet, a new CNN architecture for medical image segmentation that embeds a pre-labeled volumetric image (an atlas) and decides the final segmentation by comparing the atlas with the query volume. Unlike existing studies, which treat anatomical segmentation as a general semantic segmentation problem, we build on what makes the task distinctive: all volumes of a given anatomical part share common patterns, including the spatial hierarchy between anatomical structures. By using atlases to encode these patterns explicitly in the architecture, we were able to greatly reduce the CNN's complexity.
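To give a rough feel for what "comparing the atlas and query volumes" can mean, here is a toy sketch in plain Python. It is not CompareNet and contains no CNN: it is a classical atlas label transfer that, for each query voxel, copies the label of the most similar atlas voxel within a small search window. The function name `atlas_label_transfer`, the `radius` parameter, and the raw intensity difference used as the similarity measure are all assumptions made for illustration.

```python
from itertools import product


def atlas_label_transfer(query, atlas_image, atlas_labels, radius=1):
    """Toy atlas comparison (hypothetical helper, not the CompareNet method).

    Volumes are nested lists indexed as volume[z][y][x]. For every query
    voxel, search the atlas voxels within `radius` of the same position,
    pick the one with the closest intensity, and copy its label.
    """
    depth, height, width = len(query), len(query[0]), len(query[0][0])
    offsets = list(product(range(-radius, radius + 1), repeat=3))
    result = [[[0] * width for _ in range(height)] for _ in range(depth)]

    for z, y, x in product(range(depth), range(height), range(width)):
        best_diff = float("inf")
        for dz, dy, dx in offsets:
            az, ay, ax = z + dz, y + dy, x + dx
            if not (0 <= az < depth and 0 <= ay < height and 0 <= ax < width):
                continue  # skip neighbours that fall outside the atlas
            diff = abs(query[z][y][x] - atlas_image[az][ay][ax])
            if diff < best_diff:
                best_diff = diff
                result[z][y][x] = atlas_labels[az][ay][ax]
    return result


def make_cube_volume(size, start, stop, z_shift=0):
    """Build a size^3 volume that is 1 inside a cube and 0 elsewhere."""
    return [
        [
            [
                1 if start + z_shift <= z < stop + z_shift
                and start <= y < stop and start <= x < stop else 0
                for x in range(size)
            ]
            for y in range(size)
        ]
        for z in range(size)
    ]


# The atlas holds a labeled cube; the query is the same cube moved by one voxel.
atlas_image = make_cube_volume(6, 2, 4)
atlas_labels = make_cube_volume(6, 2, 4)
query = make_cube_volume(6, 2, 4, z_shift=1)

predicted = atlas_label_transfer(query, atlas_image, atlas_labels)
```

In this sketch the atlas carries the anatomical prior (where the labeled structure is expected to be), so the only work left is a local comparison. That is the general intuition behind atlas-based approaches, stated here under the assumptions above rather than as a description of the actual architecture.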

The paper and code will be released after publication.